Ferdy Flip Architecture

We mostly build on DN

It took me a ridiculously long time to get around to writing this article; it’s been a draft in my inbox forever. But I really wanted to finish it before I go and complicate everything even more by launching a subnet. So here we go.

Overview

Web3 apps generally require three types of components.

Frontend

Obviously no one wants to be gambling by connecting to a smart contract on Snowtrace, so we have a fancy UI located at https://www.ferdyflip.xyz that people can use instead.

The insanely talented VirtualQuery@ handles this.

Smart Contract

To do web3 stuff you need at least one smart contract. Ferdy Flip has quite a few actually; there are a few ‘core’ parts that don’t change often (the Treasury, the ReferralManager) and then we have individual games that get new versions released relatively frequently, basically whenever we support a new feature across all our games.

Although some smart contracts are upgradable, ours are not. Instead, when we add a new feature, we release the new contract, point the frontend at it, and set the backend to monitor it.

World-renowned poop artist 0xSmitty@ and I share this.

Backend

Storing and accessing EVM data costs gas as a way to incentivize you to not put load on the network. As a result, many traditional data access patterns aren’t supported by smart contracts.

As a workaround, our contracts emit ‘events’ that can be monitored. We track those events, reprocess them, and store them in a more traditional backend storage system (a database). You might hear this process described as ‘indexing’.

I mostly handle this.
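To make 'indexing' a bit more concrete, here is a minimal sketch of folding decoded events into database-style rows. The event names (`GameStarted`, `GameSettled`), the field names, and the row shape are all assumptions made up for illustration; they are not the real Ferdy Flip events or schema.

```typescript
// Hypothetical shape of a decoded contract event; names are illustrative only.
interface GameEvent {
  name: "GameStarted" | "GameSettled";
  gameId: number;
  player: string;
  blockNumber: number;
  wager?: number; // present on GameStarted
  payout?: number; // present on GameSettled
}

// Hypothetical database row: one row per game.
interface GameRow {
  gameId: number;
  player: string;
  wager: number;
  payout: number | null; // null while the game is still pending
  startedBlock: number;
  settledBlock: number | null;
}

// Fold a batch of events into rows, one per game id.
function indexEvents(events: GameEvent[]): GameRow[] {
  const byId: { [id: number]: GameRow | undefined } = {};
  const rows: GameRow[] = [];
  // Process in block order so a settlement is applied after its start.
  const ordered = events.slice().sort((a, b) => a.blockNumber - b.blockNumber);
  for (const ev of ordered) {
    if (ev.name === "GameStarted") {
      const row: GameRow = {
        gameId: ev.gameId,
        player: ev.player,
        wager: ev.wager ?? 0,
        payout: null,
        startedBlock: ev.blockNumber,
        settledBlock: null,
      };
      byId[ev.gameId] = row;
      rows.push(row);
    } else {
      const row = byId[ev.gameId];
      if (!row) continue; // settlement for a game we never saw; skip it
      row.payout = ev.payout ?? 0;
      row.settledBlock = ev.blockNumber;
    }
  }
  return rows;
}
```

A real indexer would also have to deal with chain reorgs and persistence, which this sketch ignores.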

The frontend

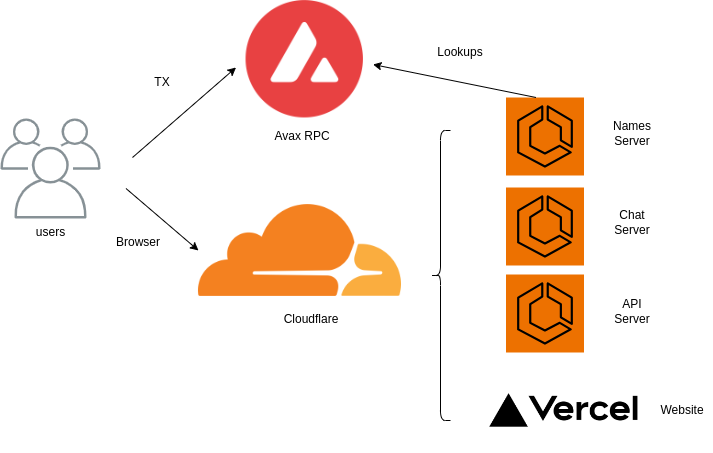

The UI is a pretty standard Next.js app using wagmi + Ethers.js for web3 stuff. Like literally all web3 apps it’s hosted on Vercel, with caching provided by Cloudflare.

The UI interacts with several different types of backend:

An Avalanche RPC node, which serves the Web3 requests for data, and where transactions are sent. Ava Labs provides an amazing public RPC endpoint, much better than that of literally any other chain. For Base, we have to use a QuickNode endpoint because the public ones are just so incredibly shitty.

The FerdyFlip API server. This provides historical information on games that were played, player information, and aggregated statistics.

The Name Resolver server. This is an in-house server that resolves user addresses to display names, including Avvy domains (.avax), Mambo Names (.mambo), and Ferdy Tags.

The Chat server. This powers the ferdybox chat widget at the side of the UI.
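As a rough illustration of what the Name Resolver does, here is a sketch that picks a display name for an address from several sources. The priority order (Ferdy Tag, then .avax, then .mambo, then a shortened address) is an assumption, as are the in-memory lookup tables; the real server presumably queries those naming systems directly.

```typescript
// Hypothetical lookup tables keyed by lowercase address.
type NameTable = { [address: string]: string | undefined };

interface NameSources {
  ferdyTags: NameTable;
  avvy: NameTable; // .avax names
  mambo: NameTable; // .mambo names
}

// Fallback when no name is registered: 0x1234...5678
function shortenAddress(address: string): string {
  return address.slice(0, 6) + "..." + address.slice(-4);
}

// Assumed priority: Ferdy Tag > .avax > .mambo > shortened address.
function resolveDisplayName(address: string, sources: NameSources): string {
  const key = address.toLowerCase();
  return (
    sources.ferdyTags[key] ??
    sources.avvy[key] ??
    sources.mambo[key] ??
    shortenAddress(address)
  );
}
```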

The smart contracts

Currently we have 10 games, so we have 10 active game smart contracts. The games all follow a very similar pattern for storing data, transferring referral payments, and interacting with the treasury.

For the typical game:

Funds are transferred in with the specs of the gamble (heads/tails, red/black, under 70 etc).

The details of the bet are validated.

The rake and referral are split off.

The remainder is transferred to the treasury while the game is pending.

A VRF request is triggered.

Several blocks later, a VRF response provides randomness for the game.

The game win/tie/loss is determined and funds are potentially transferred from the treasury to the player.
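The money side of the steps above can be sketched off-chain like this. It is only a sketch: the basis-point parameters, the win multiplier, and the rule that a tie returns the stake are assumptions for illustration, and the actual contracts do this in Solidity with their own rules.

```typescript
interface BetSplit {
  rake: number;
  referral: number;
  toTreasury: number;
}

// Split a wager into rake, referral, and the remainder sent to the treasury.
// Amounts are whole units as plain numbers for brevity; real token amounts
// would need arbitrary-precision integers. rakeBps/referralBps are hypothetical.
function splitWager(wager: number, rakeBps: number, referralBps: number): BetSplit {
  if (wager <= 0 || Math.floor(wager) !== wager) {
    throw new Error("wager must be a positive integer");
  }
  const rake = Math.floor((wager * rakeBps) / 10000);
  const referral = Math.floor((wager * referralBps) / 10000);
  return { rake, referral, toTreasury: wager - rake - referral };
}

type Outcome = "win" | "tie" | "loss";

// Amount the treasury sends back to the player once randomness arrives.
// Assumption: a tie returns the stake, a loss returns nothing.
function payoutFor(outcome: Outcome, stake: number, winMultiplier: number): number {
  if (outcome === "win") return stake * winMultiplier;
  if (outcome === "tie") return stake;
  return 0;
}
```

For example, with a 2% rake and a 0.5% referral, a wager of 1000 splits into 20, 5, and 975 to the treasury.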

PvP games have a slightly different flow because they can be canceled, and there’s (at least) a second player who has to join.

At every step, events are emitted that describe the actions that are taking place. Those events are monitored by the frontend to determine what to show the user, and by the backend for record keeping.
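One way to picture the frontend side of this is a small state machine driven by events. The event kinds and state names below are invented for the example and are not the real contract events; it is a sketch of the idea, not how the Ferdy Flip UI is actually written.

```typescript
// Hypothetical UI states for a single game.
type UiState = "idle" | "pending" | "won" | "tied" | "lost";

// Hypothetical events, as decoded from contract logs.
type UiEvent =
  | { kind: "BetPlaced" }
  | { kind: "GameSettled"; outcome: "win" | "tie" | "loss" };

// Pure transition function: current state + event -> next state.
function nextState(state: UiState, ev: UiEvent): UiState {
  // A new bet always starts a fresh pending game.
  if (ev.kind === "BetPlaced") return "pending";
  // A settlement only makes sense for a pending game; ignore stray ones.
  if (state !== "pending") return state;
  if (ev.outcome === "win") return "won";
  if (ev.outcome === "tie") return "tied";
  return "lost";
}
```

Keeping the transition pure makes it easy to replay the same event stream in the UI and in tests.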

The backend

Over time, we have accumulated an enormous number of backend systems.

Discord monitoring bot. This keeps track of our Link and Avax balances for our VRF Fulfillment. For some godforsaken reason, it’s running on an EC2 machine that also hosts the Raffllrr indexer.

Name Resolver server.

Chat server.

API server. This is a PostgREST interface to our database. Instead of maintaining a separate API server that is handcrafted, we just expose our database tables and views directly. More complicated aggregations are exposed as database functions.

The ‘dws’ database. Currently this is an AWS RDS Aurora PostgreSQL database configured in Serverless mode (our loads are very burst-y).

Four instances of the ‘dws-indexer’ server: one each for Avax, Fuji, Base, and Base Goerli.

Two instances of the ‘ferdyflip-vrf’ server for each chain, a total of 8 instances. For each chain, one is configured in ‘serve immediately’ mode, and one is a ‘fallback’ fulfillment that hopefully should never trigger (but occasionally does).
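Since the API server in the list above is PostgREST, reading from it is mostly a matter of building the right URL. Here is a small sketch of a URL builder using PostgREST's standard query parameters (`select`, `column=eq.value`, `order`, `limit`). The base URL, table name, and column names used with it are hypothetical, not the real Ferdy Flip schema.

```typescript
// A tiny subset of PostgREST's query options.
interface TableQuery {
  table: string;
  select?: string[];
  eq?: { [column: string]: string | number };
  order?: { column: string; descending?: boolean };
  limit?: number;
}

// Build a PostgREST read URL for a table or view.
function buildPostgrestUrl(baseUrl: string, q: TableQuery): string {
  const params: string[] = [];
  if (q.select && q.select.length > 0) {
    params.push("select=" + q.select.join(","));
  }
  if (q.eq) {
    for (const column of Object.keys(q.eq)) {
      // PostgREST filters look like ?column=eq.value
      params.push(column + "=eq." + encodeURIComponent(String(q.eq[column])));
    }
  }
  if (q.order) {
    params.push("order=" + q.order.column + (q.order.descending ? ".desc" : ".asc"));
  }
  if (q.limit !== undefined) {
    params.push("limit=" + q.limit);
  }
  return baseUrl + "/" + q.table + (params.length > 0 ? "?" + params.join("&") : "");
}
```

Database functions are exposed by PostgREST under `/rpc/<function_name>`, so the heavier aggregations would be called that way rather than through a table URL.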

Excluding the database (RDS) and the wacko monitoring bot (EC2), all of our services are running on Amazon’s Elastic Container Service. Containerized services are SIGNIFICANTLY easier to build, deploy, manage, and reason about. If you’re not DevOps-pilled yet, you should be.

Analytics

We do most of the Ferdy Flip analytics on the fly, as they are requested. There are not enough rows in the database for us to worry about, particularly after I migrated from the former ‘expanded’ format (one row per round in a game) to the collapsed version (one row per game).

But there are a few heavyweight queries that are referenced frequently enough to warrant precomputing. We have an hourly cron job that refreshes a materialized view for those.
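The move from the 'expanded' format to the collapsed one mentioned earlier is essentially a group-by on the game id. A minimal sketch, assuming made-up column names (`gameId`, `round`, `wager`, `payout`) rather than the real table layout:

```typescript
// Hypothetical 'expanded' row: one per round in a game.
interface RoundRow {
  gameId: number;
  round: number;
  wager: number;
  payout: number;
}

// Hypothetical 'collapsed' row: one per game.
interface GameSummary {
  gameId: number;
  rounds: number;
  totalWager: number;
  totalPayout: number;
}

// Collapse per-round rows into one summary row per game,
// keeping games in the order they first appear.
function collapseRounds(rows: RoundRow[]): GameSummary[] {
  const byId: { [id: number]: GameSummary | undefined } = {};
  const summaries: GameSummary[] = [];
  for (const r of rows) {
    let summary = byId[r.gameId];
    if (!summary) {
      summary = { gameId: r.gameId, rounds: 0, totalWager: 0, totalPayout: 0 };
      byId[r.gameId] = summary;
      summaries.push(summary);
    }
    summary.rounds += 1;
    summary.totalWager += r.wager;
    summary.totalPayout += r.payout;
  }
  return summaries;
}
```

In the database itself this would more likely be a single SQL aggregation; the TypeScript version is just to show the shape of the change.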

Other bits and bobs

I mostly covered the high level concepts, but there are a ton of tiny architecture pieces that don’t display nicely. I’ll list a few here:

Elastic Load Balancing (shipping requests to the API, naming, chat servers)

Certificate Manager (AWS issued certs that terminate at the load balancer)

Fargate (how we’re deploying on ECS)

Security groups (limiting access to/from load balancers, databases, and servers)

Probably the thing I struggled most with was setting security boundaries around resources appropriately.

Costs

Deploying all our smart contracts from scratch costs about $50 in gas (as long as you remember to adjust the default gas on Base down; that was an expensive lesson to learn).

Vercel + Cloudflare runs about $50 a month. A domain name is about $20 a year. We pay $50 a month for QuickNode.

AWS costs can vary enormously depending on what you’re doing, and how well you do it. Our costs for Ferdy Flip are about $150 a month, mainly because we optimized for safety and sanity over minimal spend.

We share that account with Avalytics though… and THAT is expensive (around $500 a month). So the lesson is to only run services that make money, because they’re cheaper and they also make you money.

The end

You might have noticed a lot more detail about the backend. That’s because I’m a backend engineer.

If you want more details about the frontend, bug VQ.

An upcoming post will have details about how to set up a testnet / mainnet for an Avax Subnet (once I finish figuring it out).

this was a fantastic write up!

as someone who only really engaged with contracts as an end user and a bit on the Dune side of things, this was a neat peek behind the proverbial curtains.

gg